Ever wondered how AI chatbots like ChatGPT hold conversations that feel almost human? Dive into the fascinating world of Large Language Models (LLMs) and discover the secrets behind their text-generating abilities! ✨

Introduction

In today’s fast-paced world of Artificial Intelligence, chatbots are becoming smarter and more capable than ever before. But have you ever considered how they generate such coherent and contextually relevant responses?

Welcome to the realm of Large Language Models (LLMs) — the powerful engines behind modern AI chatbots. In this article, we’ll explore:

- How LLMs generate text

- Enhancing conversational abilities through fine-tuning

- The art of roleplaying with LLMs

- The limitations that shape their capabilities

By the end, you’ll have a deeper understanding of the technology that’s transforming the way we interact with machines.

1. How LLMs Generate Text

The Predictive Power of LLMs

At their core, LLMs like GPT-4, Claude, and Mistral are sophisticated predictors. During training they process vast amounts of text and learn the statistical patterns of language, which they then use to predict what comes next in a piece of text.

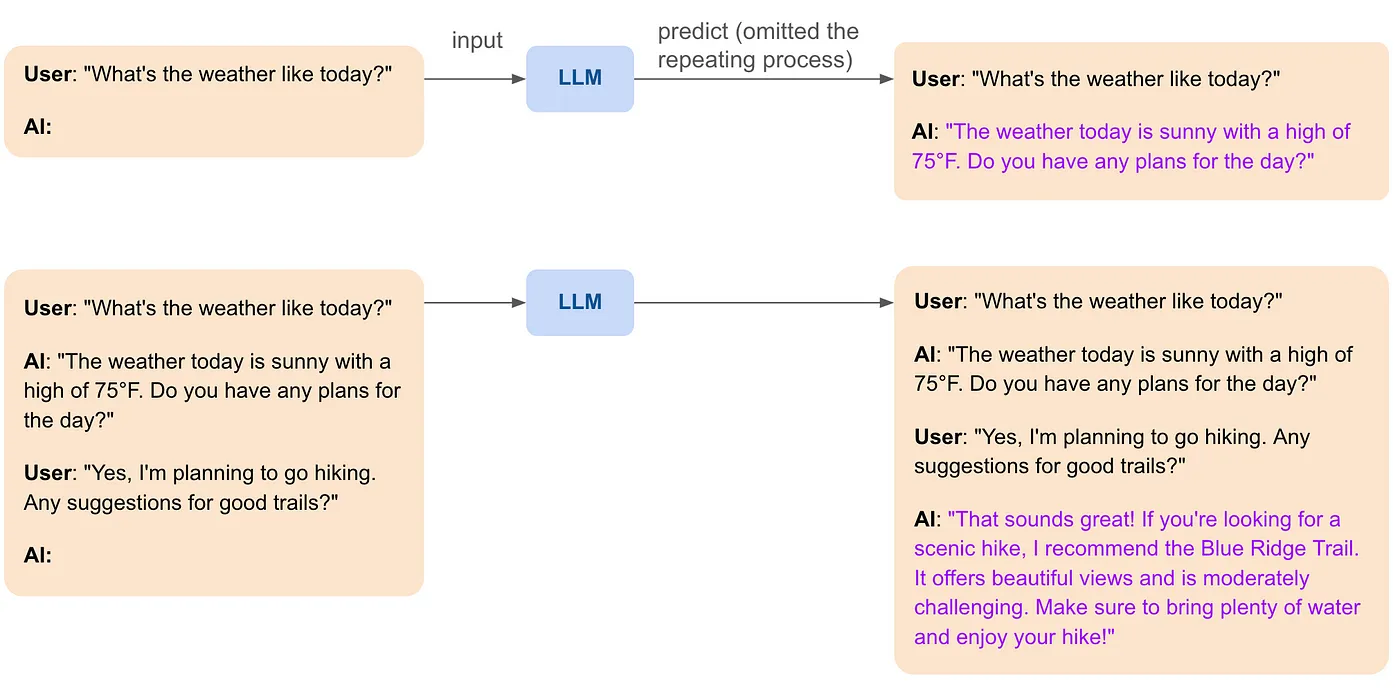

How It Works:

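- Input Processing: You provide the model with a piece of text called a prompt, which is split into tokens (words or word fragments).

- Next-Token Prediction: The model assigns a probability to every possible next token given the input, then picks one, typically the most probable token or a sample from the likeliest candidates.

- Iteration: The chosen token is appended to the input and the process repeats until the model emits a stop signal or reaches a length limit.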
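To make the next-token prediction step more concrete, here is a minimal Python sketch. The candidate tokens and their scores are made up for illustration (a real model scores tens of thousands of tokens), and picking the single most probable token is only one possible selection strategy.

```python
import math

def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(scores.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(s - peak) for tok, s in scores.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}

# Hypothetical scores a model might assign after "The quick brown fox"
toy_scores = {"jumps": 4.0, "runs": 2.5, "sleeps": 1.0, "banana": -2.0}

probs = softmax(toy_scores)
next_token = max(probs, key=probs.get)  # greedy choice: most probable token

for token, p in sorted(probs.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{token:>7}: {p:.3f}")
print("Chosen:", next_token)
```

Many chatbots sample from these probabilities instead of always taking the top token, which is one reason the same prompt can produce different answers.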

Example:

- Prompt: “The quick brown fox”

- LLM Prediction: “jumps”

- Continuation: “over”

- Completion: “the lazy dog.”

The LLM has generated the sentence:

“The quick brown fox jumps over the lazy dog.”
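The same loop can be sketched in a few lines of Python. This is a toy under strong assumptions: the `TOY_NEXT` lookup table is a hypothetical stand-in for a trained model, and it looks only at the last word, whereas a real LLM conditions on the whole context.

```python
# Toy stand-in for a trained model: maps the last token to the next one.
# A real LLM scores every token in its vocabulary using the full context.
TOY_NEXT = {
    "fox": "jumps",
    "jumps": "over",
    "over": "the",
    "the": "lazy",
    "lazy": "dog.",
}

def generate(prompt, max_new_tokens=10):
    """Repeatedly predict one token and append it to the input."""
    tokens = prompt.split()
    for _ in range(max_new_tokens):
        next_token = TOY_NEXT.get(tokens[-1])
        if next_token is None:  # nothing to predict: treat as a stop signal
            break
        tokens.append(next_token)
    return " ".join(tokens)

sentence = generate("The quick brown fox")
print(sentence)
```

Each pass through the loop adds exactly one token, which is why longer responses take longer to generate.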

Why Does This Work?

LLMs are trained on diverse datasets containing books, articles, and web pages. This extensive training enables them to capture grammar, context, and even subtle nuances of human language.

2. Enhancing Conversational Abilities

Fine-Tuning for Dialogue

While LLMs are powerful, their out-of-the-box capabilities may not be perfectly tailored for conversational interactions. That’s where fine-tuning comes in.

Note: Fine-tuning means further training an already pre-trained LLM on a smaller, specialized dataset to improve performance on a specific task, such as holding conversations.

Benefits of Fine-Tuning for Dialogue:

- Relevance: Provides answers that are on-topic and helpful.

- Naturalness: Mimics human-like conversation patterns.
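As a rough illustration of what a specialized dialogue dataset can look like, the sketch below stores conversations as lists of role-tagged turns and flattens them into training text. The `<user>` and `<assistant>` tags and the `messages` field name are assumptions for this example. Each model family defines its own chat template.

```python
import json

# Hypothetical training dialogues: each one is a list of role-tagged turns.
dialogues = [
    [
        {"role": "user", "content": "What's the best way to stay healthy?"},
        {"role": "assistant", "content": "Eating a balanced diet and exercising regularly are key."},
    ],
]

def to_training_text(dialogue):
    """Flatten one dialogue into a single string with role tags."""
    return "\n".join(f"<{turn['role']}> {turn['content']}" for turn in dialogue)

def to_jsonl(dialogues):
    """Serialize dialogues as JSON Lines, one conversation per line."""
    return "\n".join(json.dumps({"messages": d}) for d in dialogues)

training_text = to_training_text(dialogues[0])
jsonl = to_jsonl(dialogues)
print(training_text)
```

Training on many examples in this shape teaches the model to continue a user turn with an assistant-style reply rather than with arbitrary text.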

3. Roleplaying with LLMs

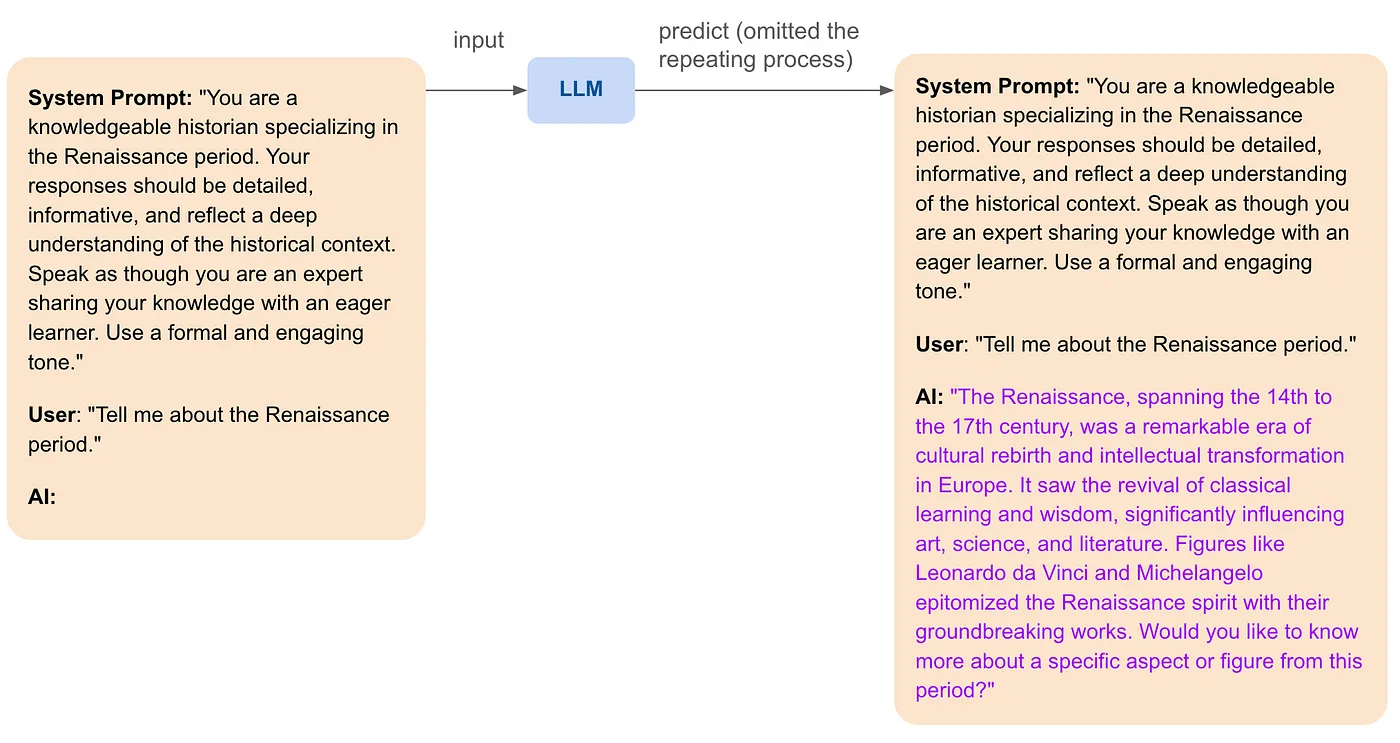

Crafting Personas Through Prompts

One of the most exciting capabilities of LLMs is their ability to roleplay. By carefully designing prompts, you can make the AI adopt specific characteristics, tones, or styles.

How It Works:

- System Prompts: Special instructions that guide the AI’s behavior.

- Role Definition: Specify the persona you want the AI to adopt.

Example Without Roleplaying:

User: “What’s the best way to stay healthy?”

AI: “Eating a balanced diet and exercising regularly are key to staying healthy.”

Example With Roleplaying as a Fitness Coach:

User: “What’s the best way to stay healthy?”

AI: “As a fitness coach, I recommend starting your day with a nutritious breakfast and incorporating at least 30 minutes of exercise daily. Let’s create a plan that fits your lifestyle!”
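In code, a persona is usually set by placing a system message ahead of the user's message. The sketch below builds such a message list. The role/content dictionary layout follows a convention used by many chat APIs, while the `build_messages` helper and the wording of the system prompt are hypothetical.

```python
def build_messages(user_text, persona=None):
    """Build a chat message list, optionally prefixed with a persona."""
    messages = []
    if persona:
        # The system prompt tells the model which role to play.
        messages.append({
            "role": "system",
            "content": f"You are {persona}. Stay in character and give practical advice.",
        })
    messages.append({"role": "user", "content": user_text})
    return messages

question = "What's the best way to stay healthy?"
plain = build_messages(question)
coached = build_messages(question, persona="an encouraging fitness coach")

for message in coached:
    print(f"{message['role']}: {message['content']}")
```

The user's question is identical in both cases. Only the system message differs, and that difference is what changes the tone of the answer.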

Applications of Roleplaying

- Customer Service: Acts as a helpful support agent.

- Education: Teaches complex subjects in an accessible way.

- Entertainment: Engages users with creative storytelling.

4. The Limitations of LLMs

While LLMs are impressive, they’re not without limitations. Understanding these helps set realistic expectations.

a. Lack of Memory or State

- No Long-Term Memory: The model itself stores nothing between requests. It only “remembers” what is included in the current prompt.

- Implication: It can’t recall previous conversations, or even earlier turns of the same one, unless the application sends that context again each time.

b. Static Intrinsic Knowledge

- Training Data Cutoff: LLMs only know information up to the point they were trained.

- No Real-Time Updates: They can’t access new information or private data unless retrained or connected to external systems.

c. Potential for Inaccuracies

- Hallucinations: May generate plausible-sounding but incorrect or nonsensical answers.

- Biases: Reflect biases present in the training data.

5. Overcoming Limitations: The Path Forward

Understanding the limitations opens doors to innovation. Two key advancements are:

a. Incorporating Memory

- Techniques: Use conversation history in prompts or employ architectures that maintain state.

- Benefit: Creates more coherent and context-aware interactions.
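The first technique, putting conversation history into the prompt, can be sketched as follows. `toy_model` is a hypothetical stand-in for an LLM that can only "know" what appears in its prompt, and `ChatSession` with its `max_turns` limit is an illustrative design, not a specific library's API.

```python
def toy_model(prompt):
    """Stand-in for an LLM: it only sees what is in the prompt."""
    if "my name is Ada" in prompt:
        return "Your name is Ada."
    return "I don't know your name."

class ChatSession:
    """Keeps the conversation history and resends it with every request."""

    def __init__(self, model, max_turns=6):
        self.model = model
        self.max_turns = max_turns  # older messages are dropped to save space
        self.history = []

    def ask(self, user_text):
        self.history.append(("User", user_text))
        recent = self.history[-self.max_turns:]
        prompt = "\n".join(f"{who}: {text}" for who, text in recent) + "\nAI:"
        reply = self.model(prompt)
        self.history.append(("AI", reply))
        return reply

# Without history, the model cannot answer.
stateless_reply = toy_model("User: What is my name?\nAI:")

# With history in the prompt, it can.
session = ChatSession(toy_model)
session.ask("Hi, my name is Ada.")
stateful_reply = session.ask("What is my name?")
print(stateless_reply, "|", stateful_reply)
```

Because prompts have a length limit, real applications also have to decide what to drop or summarize once the history grows. Here that is reduced to keeping the last `max_turns` messages.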

b. Retrieval-Augmented Generation (RAG)

- Concept: Combine LLMs with external knowledge bases.

- How It Works: The system retrieves relevant passages from a database and adds them to the prompt, so the model can base its answer on accurate and up-to-date information.

- Advantage: Allows access to private or recent data without retraining the entire model.
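Here is a minimal sketch of the retrieve-then-prompt idea. The documents are invented, and relevance is scored by simple word overlap purely to keep the example self-contained. Production systems typically use embeddings and vector search instead.

```python
import re

# Hypothetical private knowledge base
DOCUMENTS = [
    "The office is closed on public holidays.",
    "Refunds are processed within 14 days of the return request.",
    "Support is available by email from Monday to Friday.",
]

def tokenize(text):
    """Lowercase the text and return its set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, documents, top_k=1):
    """Rank documents by how many words they share with the question."""
    q = tokenize(question)
    ranked = sorted(documents, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:top_k]

def build_prompt(question, documents):
    """Put the retrieved text in front of the question."""
    context = "\n".join(f"- {d}" for d in retrieve(question, documents))
    return (
        "Answer using only this context:\n"
        f"{context}\n\n"
        f"Question: {question}\nAnswer:"
    )

question = "How long do refunds take?"
top_docs = retrieve(question, DOCUMENTS)
prompt = build_prompt(question, DOCUMENTS)
print(prompt)
```

The resulting prompt would then be sent to the LLM, which can answer from the retrieved text even though that text was never part of its training data.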

Conclusion

Large Language Models are revolutionizing the way we interact with technology. From generating human-like text to adopting specific personas, their capabilities are vast and continually expanding.

By understanding both their strengths and limitations, we can better harness their potential and build solutions, such as RAG-powered AI agents, that overcome current challenges.

Stay tuned for our next articles, where we’ll explore:

- The Importance of Memory in AI 🗂️

- Embeddings: How AI Understands Text 🔑

- Vector Search: Quick and Accurate Information Retrieval ⚡

About Epsilla

At Epsilla, we’re dedicated to making AI accessible to everyone. Our platform empowers you to build advanced AI Agents tailored to your needs, tapping into private knowledge bases for accurate and relevant interactions.

Feel free to share this article, invite friends, and join us as we demystify the world of AI!