Imagine if machines could understand data just like we do — grasping context, recognizing patterns, and making connections that aren’t explicitly stated. That’s exactly what embeddings and vector search enable AI agents to do. In this article, we’ll dive into these fascinating technologies, explore how they work together, and see how to easily harness their power. By the end, you’ll not only understand these concepts but also see how they set the stage for the next big thing: Retrieval Augmented Generation (RAG).

Introduction

Have you ever wondered how AI assistants understand your queries and provide relevant answers? Or how streaming services seem to know exactly what you want to watch next? The secret sauce behind these smart behaviors lies in embeddings and vector search. Let’s embark on a journey to demystify these concepts and see how they can transform AI agents into more intelligent and context-aware entities.

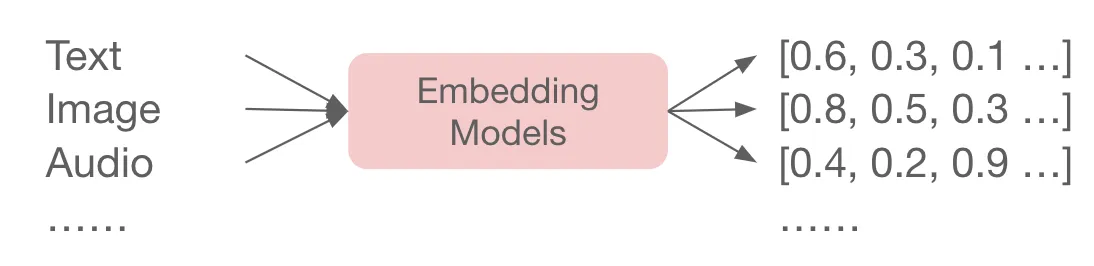

What Is Embedding?

Think of embedding as a way to translate human-understandable data, such as text, images, or audio, into a language that machines can process. Fundamentally, machines can only process numbers. So instead of working with data in its raw form, embedding converts it into numerical representations called vectors. (Yes, the math concept you learned in high school, except here the vectors can have thousands of dimensions.)
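To make "text in, vector out" concrete, here is a minimal sketch. It is not a real embedding model: `toy_embed` is a hypothetical helper that uses the hashing trick to count words into a fixed number of buckets, so it captures no meaning at all. It only shows the shape of the idea, namely that any input becomes a list of numbers of the same length.

```python
import hashlib


def toy_embed(text: str, dims: int = 8) -> list[float]:
    """Map text to a fixed-length vector using the hashing trick.

    Toy stand-in only: a real embedding model is learned from data and
    captures meaning. This one just counts which bucket each word hashes to.
    """
    vector = [0.0] * dims
    for word in text.lower().split():
        # hashlib gives a hash that is stable across runs,
        # unlike Python's built-in hash() for strings.
        digest = hashlib.md5(word.encode("utf-8")).digest()
        bucket = int.from_bytes(digest[:4], "big") % dims
        vector[bucket] += 1.0
    return vector


vec = toy_embed("best hiking trails near me")
print(len(vec))  # 8: every input maps to the same number of dimensions
print(vec)
```

A learned model would produce the same kind of output (a fixed-length list of floats), just with far more dimensions and with positions that reflect meaning rather than hash buckets.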

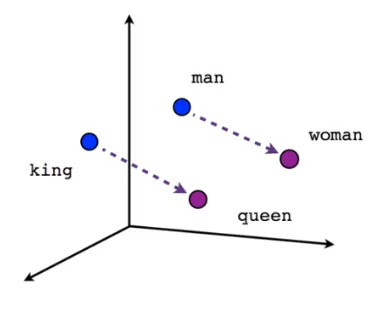

The Math Behind the Scenes

The magic of embedding lies in a neat mathematical property of the conversion: each vector is a point in a high-dimensional space, and the closer two points are, the more semantically related the data they represent. This comes from the training process, in which the embedding model learns to place semantically similar data points close together and push dissimilar ones apart.

This mathematical property is crucial because it allows machines to measure how related different pieces of data are by simply calculating distances between their vectors.
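Here is a small sketch of what "calculating distances" can look like, using two common measures: Euclidean distance and cosine similarity. The three vectors are hand-picked for illustration and do not come from any real model, and the names `cat`, `kitten`, and `car` are just labels for this example.

```python
import math


def euclidean_distance(a, b):
    """Straight-line distance between two points; smaller means closer."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors; closer to 1 means more similar."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # convention chosen here: a zero vector is similar to nothing
    return dot / (norm_a * norm_b)


# Hand-picked 3-dimensional vectors, purely illustrative.
cat = [0.9, 0.8, 0.1]
kitten = [0.85, 0.75, 0.2]
car = [0.1, 0.2, 0.9]

print(euclidean_distance(cat, kitten) < euclidean_distance(cat, car))  # True
print(cosine_similarity(cat, kitten) > cosine_similarity(cat, car))    # True
```

Both measures agree here: "cat" sits closer to "kitten" than to "car". Which measure works best in practice depends on the embedding model, so treat the choice as something to check against the model's documentation.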

The Magic of Vector Search

Now that we have our data transformed into vectors, the next challenge is finding what we need within this vast space. That’s where vector search comes in.

How It Works

When you input a query, it’s also converted into a vector, representing the meaning of your question in the same high-dimensional space. The vector search engine then finds vectors in the database that are closest to your query vector, based on their distances. This process is like finding the nearest neighbors in this space, where proximity indicates similarity. The closer a document’s vector is to your query vector, the more relevant that document is to your question. This allows the system to retrieve the most semantically similar results, even if they don’t share the exact words used in your query, making vector search powerful for understanding and matching complex queries.
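The nearest-neighbor step can be sketched as a brute-force search: score every stored vector against the query vector and keep the top `k`. The document IDs and vectors below are made up for illustration, and the `search` function is a hypothetical helper, not any particular engine's API. Real vector databases typically avoid scanning everything by using approximate nearest-neighbor indexes, which this sketch does not attempt.

```python
import heapq
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors; closer to 1 means more similar."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def search(query_vector, index, k=2):
    """Return the k (doc_id, score) pairs most similar to the query, best first."""
    scored = (
        (doc_id, cosine_similarity(query_vector, vector))
        for doc_id, vector in index.items()
    )
    return heapq.nlargest(k, scored, key=lambda pair: pair[1])


# Made-up document vectors; a real system would get these from an embedding model.
index = {
    "trail-guide": [0.9, 0.1, 0.0],
    "camping-gear": [0.7, 0.3, 0.1],
    "tax-forms": [0.0, 0.1, 0.9],
}
query = [0.8, 0.2, 0.0]

results = search(query, index, k=2)
print([doc_id for doc_id, _ in results])  # ['trail-guide', 'camping-gear']
```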

An Everyday Example

Suppose you search for “best hiking trails near me.” Your query is converted into a vector, and the vector search engine retrieves articles, reviews, or maps that are semantically similar — not just those containing the exact keywords but those discussing hiking experiences, trail recommendations, and so on.

Bringing It Together: How Embeddings and Vector Search Work Hand-in-Hand

Embeddings and vector search are like two sides of the same coin. Embeddings convert data into a numerical form that captures meaning, and vector search leverages that form to find relevant information.
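To see the two halves working together, here is a toy end-to-end sketch. Everything in it is an assumption made for illustration: `CONCEPTS` is a hand-written word list standing in for what a trained embedding model would learn, and `TinyVectorIndex` is a hypothetical in-memory index, not a real product's API. The point is only that a query can match a document without sharing a single word with it.

```python
import math

# Hypothetical hand-written "concept" lexicon standing in for a learned model.
CONCEPTS = {
    "outdoors": {"hiking", "trails", "trek", "mountain", "paths", "walks"},
    "food": {"recipe", "pasta", "dinner", "cook"},
    "finance": {"tax", "invoice", "budget", "refund"},
}
DIMENSIONS = list(CONCEPTS)  # one vector dimension per concept


def toy_embed(text):
    """Count how many words of the text fall under each concept."""
    words = text.lower().split()
    return [float(sum(word in CONCEPTS[name] for word in words)) for name in DIMENSIONS]


def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


class TinyVectorIndex:
    """Stores (text, vector) pairs and ranks them against a query."""

    def __init__(self, embed):
        self.embed = embed
        self.items = []

    def add(self, text):
        # Embedding happens once, when the document is stored.
        self.items.append((text, self.embed(text)))

    def search(self, query, k=1):
        # The query is embedded with the same function, then compared by similarity.
        query_vector = self.embed(query)
        ranked = sorted(
            self.items,
            key=lambda item: cosine_similarity(query_vector, item[1]),
            reverse=True,
        )
        return [text for text, _ in ranked[:k]]


index = TinyVectorIndex(toy_embed)
index.add("scenic mountain trek with easy paths")
index.add("quick pasta recipe for dinner")
index.add("how to file a tax refund")

print(index.search("best hiking trails near me", k=1))
# ['scenic mountain trek with easy paths']
```

The query and the top result have no words in common, yet they land on the same "outdoors" dimension. A real embedding model learns this kind of grouping from data instead of relying on a hand-written list.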

A Story to Illustrate

Imagine you’re at a networking event, and you’re trying to find people interested in AI and machine learning. You can’t go around asking each person directly. Instead, you look for cues — maybe someone is holding a book on neural networks or wearing a T-shirt with a tech logo. Embeddings help the AI “see” these cues in data, and vector search helps “locate” the people you want to talk to.

Why This Matters for AI Agents

So, why should we care about embeddings and vector search? Because they make AI agents smarter and more intuitive.

1️⃣ Understanding Context and Nuance

AI agents can grasp the subtle meanings behind words and phrases, leading to more accurate responses.

2️⃣ Efficient Information Retrieval

They can sift through vast amounts of data to find exactly what’s relevant, enhancing user experience.

3️⃣ Multimodal Capabilities

By representing different types of data uniformly, AI agents can process text, images, audio, and video seamlessly.

Getting Started with Embeddings and Vector Search in Epsilla

Let’s roll up our sleeves and see how you can implement these technologies using Epsilla.

Read more about embedding and data storage.

Real-World Applications and Benefits

The combination of embeddings and vector search unlocks a plethora of possibilities.

1️⃣ Enhanced Search Engines

Deliver search results that truly match the user’s intent, improving satisfaction and engagement.

2️⃣ Better Recommendations

By understanding the nuances of user preferences, you can offer more accurate product or content suggestions.

3️⃣ Advanced Language Processing

Improve translation services, sentiment analysis, and conversational AI by capturing context more effectively.

4️⃣ Multimodal Data Integration

Combine insights from text, images, audio, and video for applications like multimedia searches or complex data analyses.

Looking Ahead to Retrieval Augmented Generation (RAG)

Embeddings and vector search are the building blocks for more advanced techniques like Retrieval Augmented Generation (RAG). RAG goes a step further by retrieving external knowledge and supplying it to the model at answer time, which helps work around limits such as context window size and keeps responses up to date.
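As a small preview, here is a rough sketch of the step that connects retrieval to generation: packing retrieved passages into a prompt without exceeding a size budget. The function name `build_rag_prompt`, the prompt wording, and the character-based budget are all assumptions for illustration. Real systems usually count tokens rather than characters, and the model call itself is left out.

```python
def build_rag_prompt(question, retrieved_passages, max_chars=500):
    """Assemble a prompt from retrieved passages, staying within a size budget.

    Assumes retrieved_passages is already sorted with the most relevant first.
    """
    context_parts = []
    used = 0
    for passage in retrieved_passages:
        if used + len(passage) > max_chars:
            break  # budget reached; a crude stand-in for the context window limit
        context_parts.append(passage)
        used += len(passage)
    context = "\n".join(f"- {part}" for part in context_parts)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}"
    )


passages = [
    "The ridge trail is open from May to October.",
    "Parking at the trailhead costs five dollars.",
]
print(build_rag_prompt("When is the ridge trail open?", passages))
```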

What’s Next?

In our upcoming article, we’ll explore how RAG brings everything together:

- Building a Knowledge Base: We’ll discuss how to chunk your data and create embeddings, referring back to this article for a deeper understanding.

- Real-Time Retrieval: Learn how queries trigger semantic searches, again leveraging the concepts we’ve covered here.

- End-to-End Workflow: We’ll provide a flowchart to visualize how all these components interact, giving you a comprehensive view of the system.

Stay tuned to see how RAG can supercharge your AI agents!

Conclusion

Embeddings and vector search aren’t just technical jargon — they’re powerful tools that make AI agents smarter and more human-like in understanding data. By turning complex information into meaningful numerical representations and efficiently retrieving relevant data, we can build AI solutions that are more responsive, accurate, and versatile.