Welcome to another installment of our “Advanced RAG Optimization” series! If you’ve been following along, you know we’ve been exploring various ways to optimize Retrieval-Augmented Generation (RAG) to make AI interactions more efficient and user-friendly. Today, we’re diving into the fascinating world of query decomposition and how you can leverage it on the Epsilla platform. So grab a cup of your favorite beverage, and let’s get started!

Introduction

In the ever-evolving field of AI, Retrieval-Augmented Generation (RAG) has emerged as a powerful technique that combines the strengths of retrieval systems and generative models. By fetching relevant information and generating contextually appropriate responses, RAG has revolutionized how we interact with AI.

In our previous articles, we discussed:

- Aligning Question and Document Embedding Spaces with HyDE: Introduces HyDE, which uses hypothetical document embeddings to improve semantic alignment between queries and documents, boosting relevance and retrieval efficiency.

- Aligning Question and Document Embedding Spaces with Hypothetical Questions: Covers generating hypothetical queries to align embeddings, bridging semantic gaps between user questions and documents for enhanced accuracy in information retrieval.

- Multi-Perspective Retrieval and Context Enrichment through RAG Fusion: Explores combining multiple retrieval perspectives to enrich AI context, improve relevance, and overcome single-perspective limitations for more insightful, accurate responses.

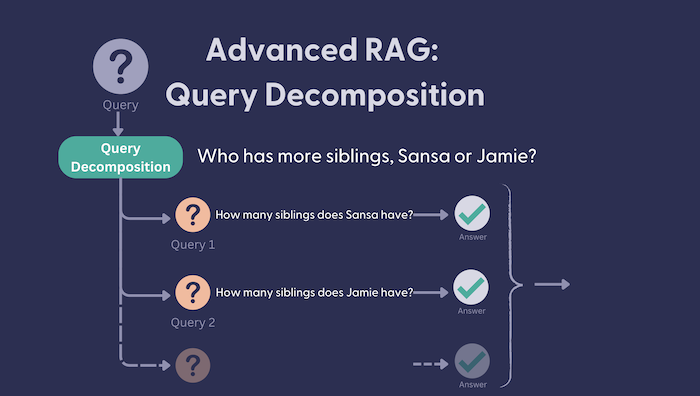

Building upon these concepts, we’re now exploring query decomposition, a strategy that breaks down complex queries into simpler, manageable parts to improve retrieval accuracy and efficiency.

What is Query Decomposition?

Query decomposition is a sophisticated technique in natural language processing and information retrieval that involves breaking down a complex query into simpler, more specific sub-queries. This method enhances the search system’s ability to retrieve relevant information by addressing each aspect of a multifaceted query individually. It ensures that no important detail is overlooked and that each sub-query targets a particular piece of information.

Why is Query Decomposition Important?

Many real-world queries are inherently composite, containing multiple distinct components. For instance, consider a query like: “Find environmentally friendly electric cars with over 300 miles of range under $40,000.” This query encompasses multiple criteria, including:

- Cars that are electric.

- Cars that are environmentally friendly.

- Cars with a range of over 300 miles.

- Cars priced under $40,000.

A search system that attempts to retrieve an answer to this query in its entirety may struggle to find documents that address all aspects simultaneously. By decomposing the query into its individual components, the system can independently search for electric cars, environmental certifications, range, and pricing. The results are then combined to provide a comprehensive and relevant answer.
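As a rough illustration of combining independent results, the Python sketch below runs one filter per criterion over a tiny in-memory catalog and intersects the matches. The catalog entries, the `Car` fields, and the `eco_certified` flag are all invented for this example; a real system would query a search index or database rather than a Python list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Car:
    name: str
    electric: bool
    eco_certified: bool
    range_miles: int
    price_usd: int


# Hypothetical catalog, invented purely for illustration.
CATALOG = [
    Car("Model A", True, True, 320, 38_000),
    Car("Model B", True, True, 250, 35_000),
    Car("Model C", False, False, 400, 30_000),
    Car("Model D", True, False, 310, 45_000),
]

# One predicate per component of the original query.
SUB_QUERIES = {
    "electric": lambda car: car.electric,
    "environmentally friendly": lambda car: car.eco_certified,
    "range over 300 miles": lambda car: car.range_miles > 300,
    "price under $40,000": lambda car: car.price_usd < 40_000,
}


def search(predicate):
    """Run a single sub-query and return the names of matching cars."""
    return {car.name for car in CATALOG if predicate(car)}


def decomposed_search():
    """Run every sub-query independently, then intersect the result sets."""
    per_query = {label: search(pred) for label, pred in SUB_QUERIES.items()}
    combined = set.intersection(*per_query.values())
    return per_query, combined


per_query, combined = decomposed_search()
for label, names in per_query.items():
    print(f"{label}: {sorted(names)}")
print("all criteria:", sorted(combined))
```

Intersection suits this query because every criterion must hold at once. For a question whose parts are independent, the results would be concatenated instead.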

How Does Query Decomposition Work?

The process of query decomposition typically involves several steps:

- Parsing the Query: The system analyzes the query’s structure to identify its components, such as key phrases, constraints, and logical operators (e.g., “and,” “or”).

- Decomposing into Sub-Queries: Each component is converted into a simpler, independent query.

- Running Individual Queries: These sub-queries are sent to the retrieval system, which searches the database or knowledge base for relevant information.

- Synthesizing Results: The system aggregates the outputs of the individual sub-queries into a unified, meaningful response, ensuring that it meets all the original query’s requirements.
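The four steps can be sketched end to end in a few lines of Python. This is a toy under stated assumptions, not a production design: `decompose` only handles questions of the form "How do I X and Y?", `retrieve` ranks by simple word overlap, and the `KNOWLEDGE_BASE` entries are made up for the example. Real systems typically use an LLM for decomposition and a vector index for retrieval.

```python
import re

# Hypothetical help-center snippets, invented for illustration.
KNOWLEDGE_BASE = {
    "reset-password": "To reset your password, open Settings > Security and choose Reset Password.",
    "update-email": "To update your email address, open Settings > Profile and edit the Email field.",
    "billing": "Invoices are available under Settings > Billing.",
}

STEM = "How do I "


def decompose(query: str) -> list[str]:
    """Parse and decompose: one sub-query per task joined by ' and '."""
    body = query.strip().rstrip("?")
    if not body.startswith(STEM):
        return [query]  # not a pattern this toy parser understands
    tasks = [task.strip() for task in body[len(STEM):].split(" and ") if task.strip()]
    return [f"{STEM}{task}?" for task in tasks]


def tokenize(text: str) -> set[str]:
    return set(re.findall(r"[a-z]+", text.lower()))


def retrieve(sub_query: str) -> str:
    """Run one sub-query: return the snippet sharing the most words with it."""
    query_tokens = tokenize(sub_query)
    return max(KNOWLEDGE_BASE.values(), key=lambda doc: len(query_tokens & tokenize(doc)))


def synthesize(sub_queries: list[str], docs: list[str]) -> str:
    """Combine the per-sub-query results into a single response."""
    return "\n".join(f"{q}\n  {d}" for q, d in zip(sub_queries, docs))


def answer(query: str) -> str:
    sub_queries = decompose(query)
    docs = [retrieve(q) for q in sub_queries]
    return synthesize(sub_queries, docs)


print(answer("How do I reset my password and update my email address?"))
```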

Why is it Important in RAG?

In RAG systems, the quality of the retrieved information significantly impacts the generated responses. Complex queries can confuse the retrieval system, leading to less accurate or incomplete answers. Query decomposition addresses this by simplifying the retrieval process, ensuring each aspect of the query is thoroughly explored.

Real-World Examples

- Customer Support: A user asks, “How do I reset my password and update my email address?” Decomposing this into two separate queries allows the support system to provide step-by-step instructions for each task.

- E-Commerce: A shopper searches for “waterproof hiking boots under $100 available in size 10.” Breaking this down helps the system filter products more effectively.

Benefits of Query Decomposition

- Improved Accuracy: By focusing on one aspect of the query at a time, the retrieval system can find the most relevant information for each component, leading to a more precise and helpful overall response.

- Efficiency in Data Retrieval: Each sub-query is simpler and more focused than the original, so the retriever has less ambiguity to resolve. Independent sub-queries can also be processed as a batch, which helps offset the extra LLM and retrieval calls that decomposition introduces.

- Enhanced User Experience: Users receive comprehensive and accurate answers to their questions, improving satisfaction and trust in the AI system.

Achieving Query Decomposition on the Epsilla Platform

Now that we’ve covered the what and why, let’s get hands-on with how you can implement query decomposition using Epsilla agent workflow customization.

Step 1: Query Decomposition

To start, add an LLM Completion Node to your workflow. Configure its system message with instructions like:

Perform query decomposition. Given a user question, break it down into the distinct sub-questions that you need to answer in order to answer the original question. If the original question is simple enough, respond with the original question as is. Otherwise, break down the input into a set of sub-problems / sub-questions that can be answered in isolation. Put each sub-problem / sub-question on a separate line.

DON’T INCLUDE A LIST NUMBER FOR EACH QUESTION.

DON’T INCLUDE ANYTHING BEFORE OR AFTER THE QUESTIONS.

These instructions tell the LLM how to decompose complex queries and keep its output easy to split line by line. Once the setup is complete, connect the user’s input question to the LLM node. When a question is submitted, the node generates one or more sub-questions, breaking down the input into smaller, actionable parts.

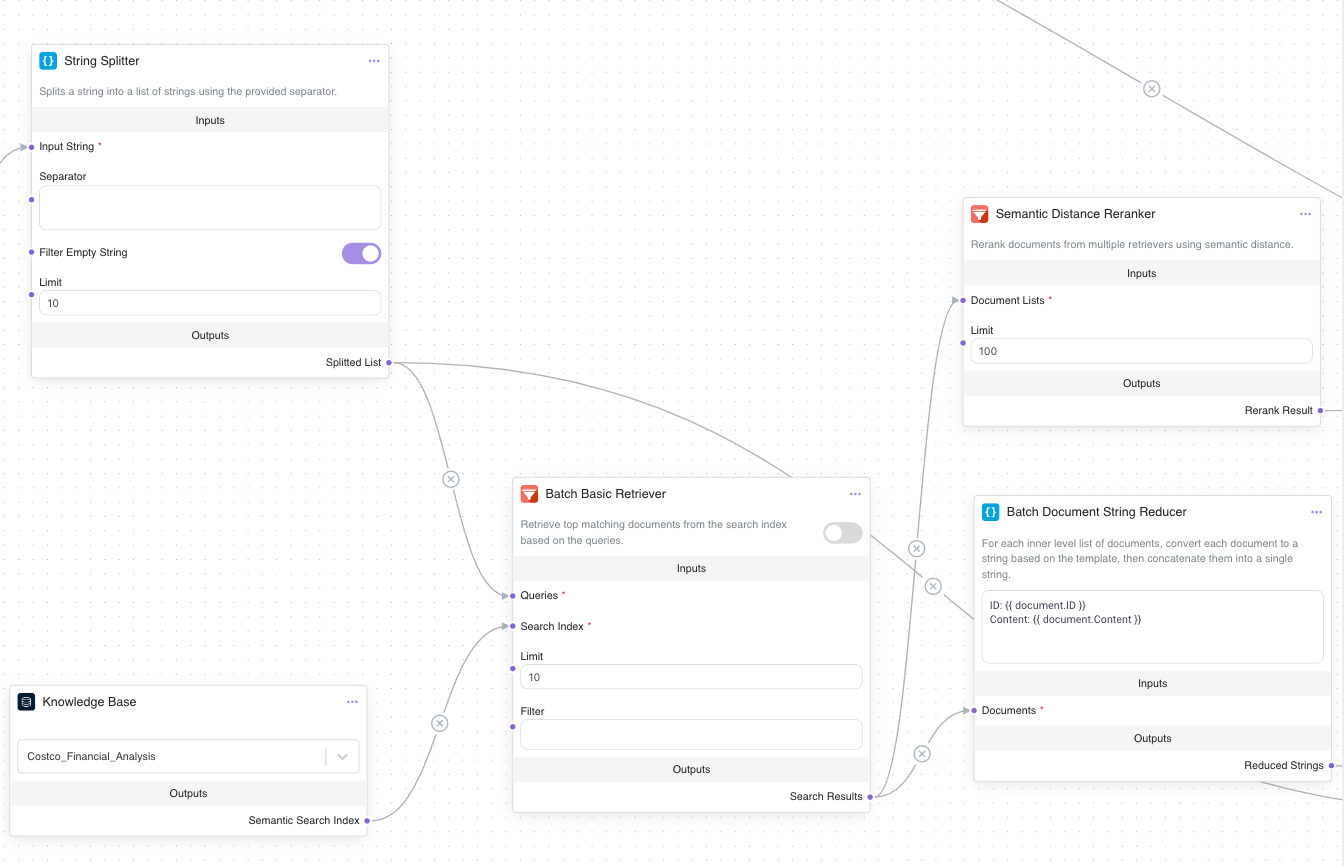
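Outside the visual workflow builder, this step amounts to sending a system message plus the user question to a model and splitting the reply into lines. The sketch below is a stand-in built on assumptions: `fake_llm` is a hypothetical stub that returns a canned reply (swap in whatever model client you use), `SYSTEM_MESSAGE` is a condensed paraphrase of the instructions above, and `parse_sub_questions` defensively strips list markers in case the model numbers its output anyway.

```python
import re

# Condensed paraphrase of the system message, not the exact wording.
SYSTEM_MESSAGE = (
    "Perform query decomposition. Break the user question into sub-questions "
    "that can be answered in isolation, one per line, with no list numbers "
    "and nothing before or after the questions."
)


def fake_llm(system: str, user: str) -> str:
    """Hypothetical stub standing in for a real LLM call; returns a canned reply."""
    return (
        "1. How does the company use membership renewals?\n"
        "\n"
        "- How does the company use Executive memberships?\n"
    )


# Matches a leading bullet or "1." / "1)" marker followed by whitespace.
LIST_MARKER = re.compile(r"^\s*(?:[-*•]|\d+[.)])\s+")


def parse_sub_questions(raw: str) -> list[str]:
    """Split the model reply into clean sub-questions, one per non-empty line."""
    questions = []
    for line in raw.splitlines():
        cleaned = LIST_MARKER.sub("", line).strip()
        if cleaned:
            questions.append(cleaned)
    return questions


def decompose(question: str, llm=fake_llm) -> list[str]:
    return parse_sub_questions(llm(SYSTEM_MESSAGE, question))


for sub_question in decompose("How does the company use renewals and Executive memberships?"):
    print(sub_question)
```

Stripping markers in code is a cheap safety net: the prompt asks for unnumbered lines, but models do not always comply.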

Step 2: Retrieval of Relevant Information

Next, retrieve answers for each sub-question from the relevant knowledge base:

- Add a String Splitter Node: Connect it to the LLM Completion Node to split the sub-questions into separate components.

- Set Up a Batch Basic Retriever: Link the String Splitter Node to a Batch Basic Retriever Node. Configure the retriever to query a knowledge base, such as “Costco Financial Report,” for relevant documents for each sub-question.

- Refine Results with a Document Reducer: Add a Batch Document Reducer Node to consolidate retrieved documents into concise context for each sub-question, outputting a list of strings.

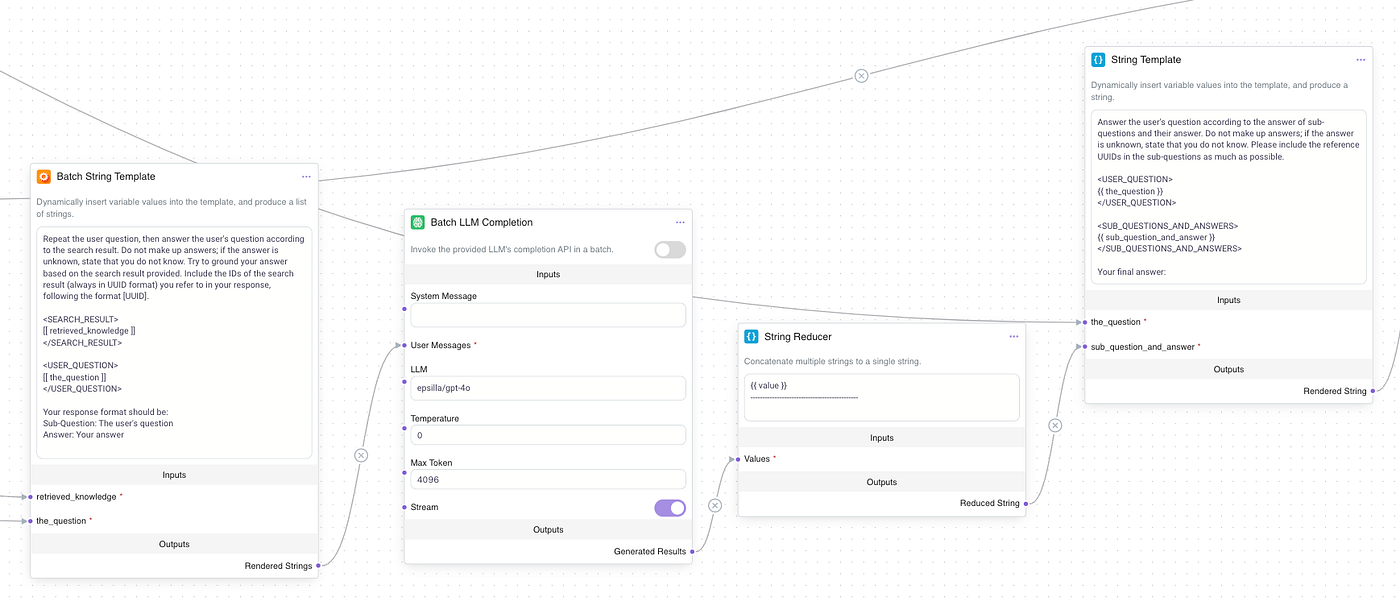
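For readers who want to see the data flow of these three nodes in code, here is a minimal Python stand-in. It is not Epsilla's implementation: the function names are hypothetical, the passages in `DOCS` are placeholders rather than text from any real report, and retrieval is simple word overlap instead of embedding search.

```python
import re

# Placeholder passages, not excerpts from any real financial report.
DOCS = [
    "Placeholder passage describing membership renewal rates.",
    "Placeholder passage describing Executive membership benefits.",
    "Placeholder passage describing warehouse locations.",
]


def split_questions(text: str) -> list[str]:
    """String splitter: one sub-question per non-empty line."""
    return [line.strip() for line in text.split("\n") if line.strip()]


def tokens(text: str) -> set[str]:
    return set(re.findall(r"[a-z]+", text.lower()))


def batch_retrieve(questions: list[str], top_k: int = 2) -> list[list[str]]:
    """Batch retriever: for each question, the top_k passages by word overlap."""
    results = []
    for question in questions:
        question_tokens = tokens(question)
        ranked = sorted(
            DOCS,
            key=lambda doc: len(question_tokens & tokens(doc)),
            reverse=True,
        )
        results.append(ranked[:top_k])
    return results


def batch_reduce(doc_lists: list[list[str]]) -> list[str]:
    """Document reducer: join each question's passages into one context string."""
    return ["\n".join(docs) for docs in doc_lists]


llm_output = (
    "What is the membership renewal rate?\n"
    "What benefits do Executive memberships offer?"
)
questions = split_questions(llm_output)
contexts = batch_reduce(batch_retrieve(questions, top_k=1))
for question, context in zip(questions, contexts):
    print(question, "->", context)
```

The important property is that the output stays aligned with the input: the i-th context string belongs to the i-th sub-question, which the next step relies on.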

Step 3: Tackling the Sub-questions

Use a Batch LLM Completion Node to resolve the sub-questions one by one. In its prompt, instruct the LLM to repeat each sub-question and then answer it using the context retrieved for that sub-question. Then, use a String Reducer Node to combine the outputs into a single string.

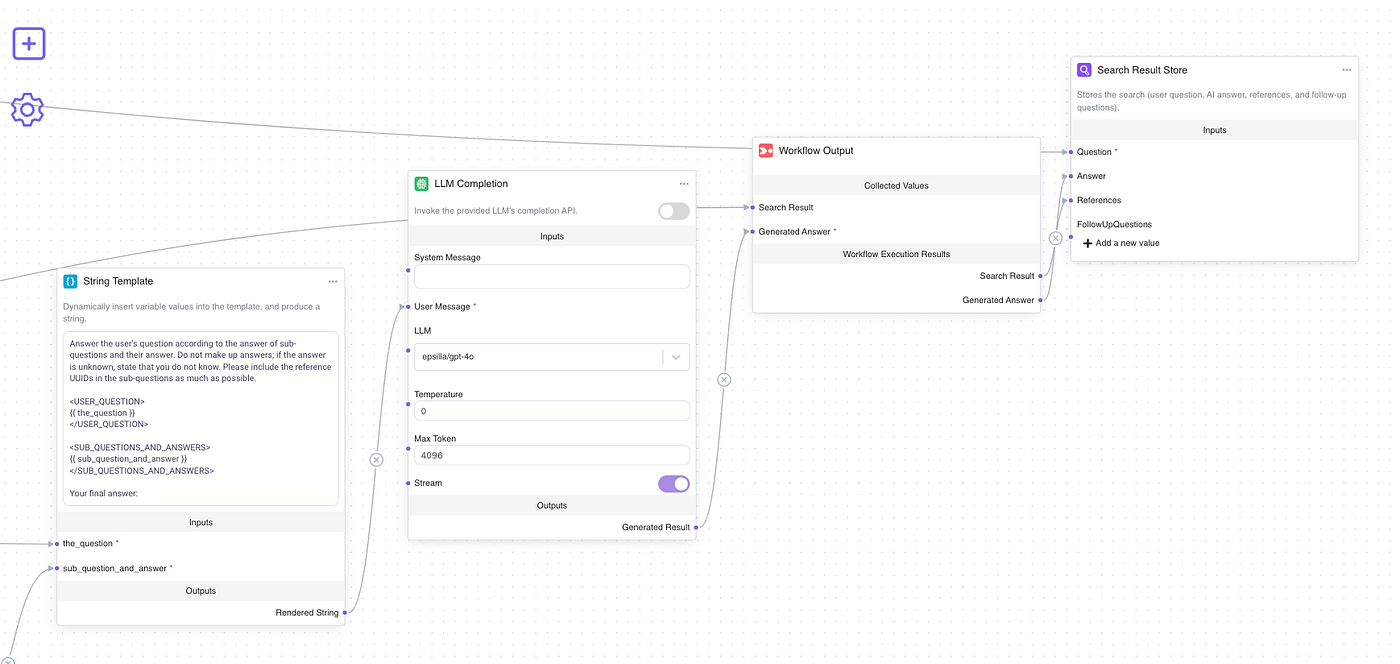
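In code terms, this step is a map over (sub-question, context) pairs followed by a join. The sketch below assumes a hypothetical `stub_llm` in place of a real model call, and the prompt wording in `PROMPT` is illustrative rather than the exact text used in the workflow.

```python
# Illustrative prompt wording; adapt it to your own workflow.
PROMPT = (
    "Repeat the sub-question, then answer it using only the context.\n"
    "Sub-question: {question}\n"
    "Context: {context}\n"
)


def stub_llm(prompt: str) -> str:
    """Hypothetical stand-in for a model call: echoes the sub-question."""
    question = prompt.splitlines()[1].removeprefix("Sub-question: ")
    return f"{question}\nAnswer: <model answer would appear here>"


def batch_complete(questions: list[str], contexts: list[str], llm) -> list[str]:
    """Batch LLM completion: one call per (sub-question, context) pair."""
    if len(questions) != len(contexts):
        raise ValueError("each sub-question needs exactly one context string")
    return [
        llm(PROMPT.format(question=question, context=context))
        for question, context in zip(questions, contexts)
    ]


def reduce_strings(parts: list[str], separator: str = "\n\n") -> str:
    """String reducer: join the per-question outputs into a single string."""
    return separator.join(parts)


questions = ["What is the renewal rate?", "What do Executive members get?"]
contexts = ["placeholder context one", "placeholder context two"]
sub_question_and_answer = reduce_strings(batch_complete(questions, contexts, stub_llm))
print(sub_question_and_answer)
```

Having the model repeat the sub-question keeps each answer self-describing, so the combined string still makes sense once the original list structure is gone.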

Step 4: Synthesizing the Final Answer

After retrieving answers for all sub-questions, synthesize them into a cohesive response:

Format the Final Prompt: Add a String Template Node and set its instructions to:

Answer the user’s question based on the sub-questions and their answers. Do not make up answers; if the answer is unknown, state that you do not know. Please include the reference UUIDs from the sub-questions as much as possible.

{{ the_question }}

{{ sub_question_and_answer }}

Your final answer:

Finalize the Output: Use a final LLM Completion Node to polish the response. Connect this node to the String Template Node, then link the result to the Workflow Output Node for display.
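To make the template step concrete, here is a small Python sketch that fills the two placeholders and produces the final prompt. The `render` helper is a minimal regex-based imitation of `{{ name }}` substitution and makes no claim about how the String Template Node works internally; the instruction text in `TEMPLATE` is shortened for the example.

```python
import re

# Shortened version of the template instructions, for illustration only.
TEMPLATE = (
    "Answer the user's question based on the sub-questions and their answers.\n"
    "\n"
    "{{ the_question }}\n"
    "\n"
    "{{ sub_question_and_answer }}\n"
    "\n"
    "Your final answer:"
)

PLACEHOLDER = re.compile(r"\{\{\s*(\w+)\s*\}\}")


def render(template: str, variables: dict[str, str]) -> str:
    """Replace each {{ name }} placeholder with its value; fail on missing names."""
    def substitute(match: re.Match) -> str:
        name = match.group(1)
        if name not in variables:
            raise KeyError(f"missing template variable: {name}")
        return variables[name]

    return PLACEHOLDER.sub(substitute, template)


final_prompt = render(
    TEMPLATE,
    {
        "the_question": "How does the company use renewals and Executive memberships?",
        "sub_question_and_answer": "Sub-question 1 ...\nAnswer 1 ...",
    },
)
print(final_prompt)
```

Raising on a missing variable is a deliberate choice here: a silently empty placeholder would produce a prompt that looks valid but gives the final LLM nothing to synthesize.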

Case Study: The Impact of Query Decomposition on Financial Report Analysis

To illustrate the transformative power of query decomposition, we conducted a comparison using two search agents on Costco’s financial reports. One agent operated without query decomposition, directly handling the complex query as a single unit. The other employed query decomposition, breaking down the query into smaller, more manageable sub-questions. Below are the results, highlighting the difference in clarity and detail between the two approaches.

The Query

“With Costco’s membership model contributing significantly to its recurring revenue stream, how does the company leverage membership renewals and Executive memberships to enhance customer loyalty and profitability?”

The Result

Key Differences

The agent using query decomposition provides a far more informative and actionable answer by thoroughly analyzing different aspects of the query, while the non-decomposed approach remains high-level and less helpful. This highlights the importance of decomposition in improving response quality and clarity.

Conclusion

Query decomposition is a transformative technique in the realm of Retrieval-Augmented Generation (RAG) systems, addressing the challenges of handling complex, multi-faceted queries. By systematically breaking down intricate questions into smaller, focused sub-queries, it not only enhances retrieval accuracy but also ensures that each aspect of the query is thoroughly explored. This process enables AI systems to generate responses that are both precise and comprehensive, leading to more reliable and user-friendly outcomes.

As the demand for intelligent and context-aware AI solutions continues to grow, query decomposition stands out as an essential optimization strategy for improving system performance. Whether you’re designing advanced search engines, conversational agents, or knowledge retrieval tools, mastering query decomposition can unlock new levels of efficiency and relevance.

In our next articles, we’ll dive deeper into more advanced RAG techniques and real-world implementations to further enhance your AI systems. Stay tuned for more insights and actionable strategies to take your RAG workflows to the next level!